mirror of

https://github.com/lucaspalomodevelop/indico-plugins.git

synced 2026-03-12 23:27:22 +00:00

Prometheus: Initial commit (#201)

parent

6c3c1a2f1d

commit

7149c38e50

3

.github/workflows/ci.yml

vendored

@@ -195,6 +195,7 @@ jobs:
           - plugin: payment_paypal
           - plugin: storage_s3
           - plugin: vc_zoom
+          - plugin: prometheus

     steps:
       - uses: actions/checkout@v3
@@ -224,7 +225,7 @@ jobs:
           echo "$(pwd)/.venv/bin" >> $GITHUB_PATH

       - name: Install extra dependencies
-        if: matrix.plugin == 'citadel'
+        if: matrix.plugin == 'citadel' || matrix.plugin == 'prometheus'
         run: |
           pip install -e "${GITHUB_WORKSPACE}/livesync/"

32

prometheus/README.md

Normal file

@@ -0,0 +1,32 @@
# Indico Prometheus Plugin

This plugin exposes a `/metrics` endpoint which provides Prometheus-compatible output.



## prometheus.yml
```yaml
scrape_configs:
  - job_name: indico_stats
    metrics_path: /metrics
    scheme: https
    static_configs:
      - targets:
        - yourindicoserver.example.com
    # it is recommended that you set a bearer token in the config
    authorization:
      credentials: xxxxxx
    # in development setups, also add the tls_config block shown below
```

If you are running a development setup, you may want to add this to the job entry under `scrape_configs`:
```yaml
    tls_config:
      insecure_skip_verify: true
```

## Changelog

### 3.2

- Initial release for Indico 3.2
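To check the endpoint by hand before pointing Prometheus at it, a request carrying the same bearer token can be built with the standard library. This is only a minimal sketch: the helper name `build_metrics_request` is hypothetical, and the host and token are the placeholders used in the README.

```python
from urllib.request import Request


def build_metrics_request(base_url: str, token: str = '') -> Request:
    """Build a GET request for the plugin's /metrics endpoint.

    The Authorization header is only added when a token is given,
    since the bearer token is optional in the plugin settings.
    """
    headers = {'Authorization': f'Bearer {token}'} if token else {}
    return Request(f'{base_url.rstrip("/")}/metrics', headers=headers)


req = build_metrics_request('https://yourindicoserver.example.com', 'xxxxxx')
print(req.full_url)
```

Passing the request to `urllib.request.urlopen(req)` would perform the actual scrape. Going by the controller in this commit, a 401 response indicates a token mismatch and a 503 that the plugin is still disabled.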

11

prometheus/indico_prometheus/__init__.py

Normal file

@@ -0,0 +1,11 @@
# This file is part of the Indico plugins.
# Copyright (C) 2002 - 2023 CERN
#
# The Indico plugins are free software; you can redistribute
# them and/or modify them under the terms of the MIT License;
# see the LICENSE file for more details.

from indico.util.i18n import make_bound_gettext


_ = make_bound_gettext('prometheus')

15

prometheus/indico_prometheus/blueprint.py

Normal file

@@ -0,0 +1,15 @@
# This file is part of the Indico plugins.
# Copyright (C) 2002 - 2023 CERN
#
# The Indico plugins are free software; you can redistribute
# them and/or modify them under the terms of the MIT License;
# see the LICENSE file for more details.

from indico.core.plugins import IndicoPluginBlueprint

from indico_prometheus.controllers import RHMetrics


blueprint = IndicoPluginBlueprint('prometheus', __name__)

blueprint.add_url_rule('/metrics', 'metrics', RHMetrics)

56

prometheus/indico_prometheus/controllers.py

Normal file

@@ -0,0 +1,56 @@
# This file is part of the Indico plugins.
# Copyright (C) 2002 - 2023 CERN
#
# The Indico plugins are free software; you can redistribute
# them and/or modify them under the terms of the MIT License;
# see the LICENSE file for more details.

from flask import make_response, request
from flask_pluginengine import current_plugin
from prometheus_client.exposition import _bake_output
from prometheus_client.registry import REGISTRY
from werkzeug.exceptions import ServiceUnavailable, Unauthorized

from indico.core.cache import make_scoped_cache
from indico.web.rh import RH, custom_auth

from indico_prometheus.metrics import update_metrics


cache = make_scoped_cache('prometheus_metrics')


@custom_auth
class RHMetrics(RH):
    def _check_access(self):
        if not current_plugin.settings.get('enabled'):
            raise ServiceUnavailable
        token = current_plugin.settings.get('token')
        if token and token != request.bearer_token:
            raise Unauthorized

    def _process(self):
        accept_header = request.headers.get('Accept')
        accept_encoding_header = request.headers.get('Accept-Encoding')
        metrics = cache.get('metrics')

        cached = False
        if metrics:
            cached = True
            status, headers, output = metrics
        else:
            update_metrics(
                current_plugin.settings.get('active_user_age'), cache, current_plugin.settings.get('heavy_cache_ttl')
            )
            status, headers, output = _bake_output(
                REGISTRY, accept_header, accept_encoding_header, request.args, False
            )
            cache.set('metrics', (status, headers, output), timeout=current_plugin.settings.get('global_cache_ttl'))

        resp = make_response(output)
        resp.status = status

        resp.headers['X-Cached'] = 'yes' if cached else 'no'
        resp.headers.extend(headers)

        return resp
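The access rules in `_check_access` can be restated as a small pure function, which makes the three possible outcomes easy to see. This is a sketch only: `metrics_access_status` is a hypothetical helper, not part of the commit, and `hmac.compare_digest` is swapped in for the plain `!=` comparison the controller uses, as one possible way to avoid timing differences when comparing tokens.

```python
import hmac
from typing import Optional


def metrics_access_status(enabled: bool, configured_token: str, presented_token: Optional[str]) -> int:
    """Return the HTTP status the access check would produce."""
    if not enabled:
        return 503  # ServiceUnavailable: the plugin is switched off
    if configured_token:
        presented = presented_token or ''
        # Assumption: constant-time comparison instead of the `!=` used in the commit
        if not hmac.compare_digest(configured_token.encode(), presented.encode()):
            return 401  # Unauthorized: missing or wrong bearer token
    return 200  # no token configured, or the token matched
```

Note that an empty configured token leaves the endpoint open to anyone once the plugin is enabled, which is why the settings form suggests setting a token before switching it on.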

179

prometheus/indico_prometheus/metrics.py

Normal file

@@ -0,0 +1,179 @@
# This file is part of the Indico plugins.
# Copyright (C) 2002 - 2023 CERN
#
# The Indico plugins are free software; you can redistribute
# them and/or modify them under the terms of the MIT License;
# see the LICENSE file for more details.

from datetime import timedelta

from prometheus_client import metrics

from indico.core.cache import ScopedCache
from indico.core.db import db
from indico.core.db.sqlalchemy.protection import ProtectionMode
from indico.modules.attachments.models.attachments import Attachment, AttachmentFile
from indico.modules.auth.models.identities import Identity
from indico.modules.categories.models.categories import Category
from indico.modules.events.models.events import Event
from indico.modules.rb.models.reservation_occurrences import ReservationOccurrence
from indico.modules.rb.models.reservations import Reservation
from indico.modules.rb.models.rooms import Room
from indico.modules.users.models.users import User
from indico.util.date_time import now_utc

from indico_prometheus.queries import get_attachment_query, get_note_query


# Check for availability of the LiveSync plugin
LIVESYNC_AVAILABLE = True
try:
    from indico_livesync.models.queue import LiveSyncQueueEntry
except ImportError:
    LIVESYNC_AVAILABLE = False


num_active_events = metrics.Gauge('indico_num_active_events', 'Number of Active Events')
num_events = metrics.Gauge('indico_num_events', 'Number of Events')

num_active_users = metrics.Gauge('indico_num_active_users', 'Number of Active Users (logged in within active_user_age)')
num_users = metrics.Gauge('indico_num_users', 'Number of Users')

num_categories = metrics.Gauge('indico_num_categories', 'Number of Categories')

num_active_attachment_files = metrics.Gauge('indico_num_active_attachment_files', 'Number of attachment files')
num_attachment_files = metrics.Gauge(
    'indico_num_attachment_files',
    'Total number of attachment files, including older versions / deleted'
)

size_active_attachment_files = metrics.Gauge(
    'indico_size_active_attachment_files',
    'Total size of all active attachment files (bytes)'
)
size_attachment_files = metrics.Gauge(
    'indico_size_attachment_files',
    'Total size of all attachment files, including older versions / deleted (bytes)'
)

num_notes = metrics.Gauge('indico_num_notes', 'Number of notes')

num_active_rooms = metrics.Gauge('indico_num_active_rooms', 'Number of active rooms')
num_rooms = metrics.Gauge('indico_num_rooms', 'Number of rooms')
num_restricted_rooms = metrics.Gauge('indico_num_restricted_rooms', 'Number of restricted rooms')
num_rooms_with_confirmation = metrics.Gauge(
    'indico_num_rooms_with_confirmation',
    'Number of rooms requiring manual confirmation'
)

num_bookings = metrics.Gauge('indico_num_bookings', 'Number of bookings')
num_valid_bookings = metrics.Gauge('indico_num_valid_bookings', 'Number of valid bookings')
num_pending_bookings = metrics.Gauge('indico_num_pending_bookings', 'Number of pending bookings')

num_occurrences = metrics.Gauge('indico_num_booking_occurrences', 'Number of occurrences')
num_valid_occurrences = metrics.Gauge('indico_num_valid_booking_occurrences', 'Number of valid occurrences')

num_ongoing_occurrences = metrics.Gauge('indico_num_ongoing_booking_occurrences', 'Number of ongoing bookings')

if LIVESYNC_AVAILABLE:
    size_livesync_queues = metrics.Gauge('indico_size_livesync_queues', 'Items in Livesync queues')
    num_livesync_events_category_changes = metrics.Gauge(
        'indico_num_livesync_events_category_changes',
        'Number of event updates due to category changes queued up in Livesync'
    )


def get_attachment_stats():
    attachment_subq = db.aliased(Attachment, get_attachment_query().subquery('attachment'))

    return {
        'num_active': get_attachment_query().count(),
        'num_total': AttachmentFile.query.join(Attachment, AttachmentFile.attachment_id == Attachment.id).count(),
        'size_active': (
            db.session.query(db.func.sum(AttachmentFile.size))
            .filter(AttachmentFile.id == attachment_subq.file_id)
            .scalar() or 0
        ),
        'size_total': (
            db.session.query(db.func.sum(AttachmentFile.size))
            .join(Attachment, AttachmentFile.attachment_id == Attachment.id)
            .scalar() or 0
        )
    }


def update_metrics(active_user_age: timedelta, cache: ScopedCache, heavy_cache_ttl: timedelta):
    """Update all metrics."""
    now = now_utc()
    num_events.set(Event.query.filter(~Event.is_deleted).count())
    num_active_events.set(Event.query.filter(~Event.is_deleted, Event.start_dt <= now, Event.end_dt >= now).count())
    num_users.set(User.query.filter(~User.is_deleted).count())
    num_active_users.set(
        User.query
        .filter(Identity.last_login_dt > (now - active_user_age))
        .join(Identity).group_by(User).count()
    )
    num_categories.set(Category.query.filter(~Category.is_deleted).count())

    attachment_stats = cache.get('metrics_heavy')
    if not attachment_stats:
        attachment_stats = get_attachment_stats()
        cache.set('metrics_heavy', attachment_stats, timeout=heavy_cache_ttl)

    num_active_attachment_files.set(attachment_stats['num_active'])
    num_attachment_files.set(attachment_stats['num_total'])

    size_active_attachment_files.set(attachment_stats['size_active'])
    size_attachment_files.set(attachment_stats['size_total'])

    if LIVESYNC_AVAILABLE:
        size_livesync_queues.set(LiveSyncQueueEntry.query.filter(~LiveSyncQueueEntry.processed).count())
        num_livesync_events_category_changes.set(
            db.session.query(db.func.sum(Category.deep_events_count))
            .join(LiveSyncQueueEntry)
            .filter(~LiveSyncQueueEntry.processed, LiveSyncQueueEntry.type == 1)
            .scalar() or 0
        )

    num_notes.set(get_note_query().count())

    num_rooms.set(Room.query.filter(~Room.is_deleted).count())
    num_active_rooms.set(Room.query.filter(~Room.is_deleted, Room.is_reservable).count())
    num_restricted_rooms.set(
        Room.query.filter(~Room.is_deleted, Room.protection_mode == ProtectionMode.protected).count()
    )
    num_rooms_with_confirmation.set(Room.query.filter(~Room.is_deleted, Room.reservations_need_confirmation).count())

    num_bookings.set(Reservation.query.filter(~Room.is_deleted).join(Room).count())
    num_valid_bookings.set(Reservation.query.filter(~Room.is_deleted, ~Reservation.is_rejected).join(Room).count())
    num_pending_bookings.set(
        Reservation.query.filter(
            ~Room.is_deleted,
            Reservation.is_pending,
            ~Reservation.is_archived
        ).join(Room).count()
    )

    num_occurrences.set(ReservationOccurrence.query.count())
    num_valid_occurrences.set(
        ReservationOccurrence
        .query
        .filter(~Room.is_deleted, Reservation.is_accepted, ReservationOccurrence.is_valid)
        .join(Reservation)
        .join(Room)
        .count()
    )

    num_ongoing_occurrences.set(
        ReservationOccurrence
        .query
        .filter(
            ~Room.is_deleted,
            Reservation.is_accepted,
            ReservationOccurrence.is_valid,
            ReservationOccurrence.start_dt < now,
            ReservationOccurrence.end_dt > now
        ).join(Reservation)
        .join(Room)
        .count()
    )
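The commit caches at two levels: the whole rendered response under `metrics` (global TTL) and the expensive attachment statistics under `metrics_heavy` (heavy TTL). The interaction of the two timeouts can be sketched with a small in-memory stand-in. Everything here is illustrative: `TTLCache` and `collect` are hypothetical names, not Indico's `ScopedCache`, and the clock is injectable only so the behaviour can be checked without waiting.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Tiny in-memory stand-in for a scoped cache with per-key timeouts."""

    def __init__(self, clock: Callable[[], float] = time.monotonic):
        self._clock = clock
        self._data: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str) -> Any:
        entry = self._data.get(key)
        # Treat missing and expired entries the same way
        if entry is None or entry[0] <= self._clock():
            return None
        return entry[1]

    def set(self, key: str, value: Any, timeout: float) -> None:
        self._data[key] = (self._clock() + timeout, value)


def collect(cache: TTLCache, cheap: Callable[[], int], heavy: Callable[[], int],
            global_ttl: float, heavy_ttl: float) -> dict:
    """Return the cached snapshot, recomputing heavy values only when stale."""
    snapshot = cache.get('metrics')
    if snapshot is not None:
        return snapshot
    heavy_value = cache.get('metrics_heavy')
    if heavy_value is None:
        heavy_value = heavy()
        cache.set('metrics_heavy', heavy_value, timeout=heavy_ttl)
    snapshot = {'cheap': cheap(), 'heavy': heavy_value}
    cache.set('metrics', snapshot, timeout=global_ttl)
    return snapshot
```

With the default settings (5 minutes global, 30 minutes heavy), a scrape that arrives after the global entry has expired still reuses the attachment statistics until the heavy entry expires as well.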

77

prometheus/indico_prometheus/plugin.py

Normal file

@@ -0,0 +1,77 @@
# This file is part of the Indico plugins.
# Copyright (C) 2002 - 2023 CERN
#
# The Indico plugins are free software; you can redistribute
# them and/or modify them under the terms of the MIT License;
# see the LICENSE file for more details.

from datetime import timedelta

from wtforms.fields import BooleanField
from wtforms.validators import DataRequired, Optional

from indico.core.plugins import IndicoPlugin
from indico.core.settings.converters import TimedeltaConverter
from indico.web.forms.base import IndicoForm
from indico.web.forms.fields import IndicoPasswordField, TimeDeltaField
from indico.web.forms.widgets import SwitchWidget

from indico_prometheus import _
from indico_prometheus.blueprint import blueprint


class PluginSettingsForm(IndicoForm):
    enabled = BooleanField(
        _("Enabled"), [DataRequired()],
        description=_("Endpoint enabled. Turn this on once you set a proper bearer token."),
        widget=SwitchWidget()
    )
    global_cache_ttl = TimeDeltaField(
        _('Global Cache TTL'),
        [DataRequired()],
        description=_('TTL for "global" cache (everything)'),
        units=('seconds', 'minutes', 'hours')
    )
    heavy_cache_ttl = TimeDeltaField(
        _('Heavy Cache TTL'),
        [DataRequired()],
        description=_('TTL for "heavy" cache (more expensive queries such as attachments)'),
        units=('seconds', 'minutes', 'hours')
    )
    token = IndicoPasswordField(
        _('Bearer Token'),
        [Optional()],
        toggle=True,
        description=_('Authentication bearer token for Prometheus')
    )
    active_user_age = TimeDeltaField(
        _('Max. Active user age'),
        [DataRequired()],
        description=_('Time since login after which a user is not considered active anymore'),
        units=('minutes', 'hours', 'days')
    )


class PrometheusPlugin(IndicoPlugin):
    """Prometheus

    Provides a metrics endpoint which can be queried by Prometheus
    """

    configurable = True
    settings_form = PluginSettingsForm
    default_settings = {
        'enabled': False,
        'global_cache_ttl': timedelta(minutes=5),
        'heavy_cache_ttl': timedelta(minutes=30),
        'token': '',
        'active_user_age': timedelta(hours=48)
    }
    settings_converters = {
        'global_cache_ttl': TimedeltaConverter,
        'heavy_cache_ttl': TimedeltaConverter,
        'active_user_age': TimedeltaConverter
    }

    def get_blueprints(self):
        return blueprint
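The three `timedelta` settings are registered with `TimedeltaConverter` because a `timedelta` cannot be stored as plain JSON. The round trip can be sketched as follows, assuming the value is persisted as a whole number of seconds. That storage format is an assumption made for illustration, and the two helper functions are hypothetical rather than Indico's API.

```python
from datetime import timedelta


def timedelta_to_stored(value: timedelta) -> int:
    """Serialise a timedelta as whole seconds (assumed storage format)."""
    return int(value.total_seconds())


def stored_to_timedelta(value: int) -> timedelta:
    """Rebuild the timedelta from the stored number of seconds."""
    return timedelta(seconds=value)


# Default values taken from PrometheusPlugin.default_settings
defaults = {
    'global_cache_ttl': timedelta(minutes=5),
    'heavy_cache_ttl': timedelta(minutes=30),
    'active_user_age': timedelta(hours=48),
}
stored = {name: timedelta_to_stored(value) for name, value in defaults.items()}
print(stored)
```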

117

prometheus/indico_prometheus/queries.py

Normal file

@@ -0,0 +1,117 @@
# This file is part of the Indico plugins.
# Copyright (C) 2002 - 2023 CERN
#
# The Indico plugins are free software; you can redistribute
# them and/or modify them under the terms of the MIT License;
# see the LICENSE file for more details.

from indico.core.db import db
from indico.core.db.sqlalchemy.links import LinkType
from indico.modules.attachments.models.attachments import Attachment
from indico.modules.attachments.models.folders import AttachmentFolder
from indico.modules.events.contributions import Contribution
from indico.modules.events.contributions.models.subcontributions import SubContribution
from indico.modules.events.models.events import Event
from indico.modules.events.notes.models.notes import EventNote
from indico.modules.events.sessions import Session


def get_note_query():
    """Build an ORM query which gets all notes."""
    contrib_event = db.aliased(Event)
    contrib_session = db.aliased(Session)
    subcontrib_contrib = db.aliased(Contribution)
    subcontrib_session = db.aliased(Session)
    subcontrib_event = db.aliased(Event)
    session_event = db.aliased(Event)

    note_filter = db.and_(
        ~EventNote.is_deleted,
        db.or_(
            EventNote.link_type != LinkType.event,
            ~Event.is_deleted
        ),
        db.or_(
            EventNote.link_type != LinkType.contribution,
            ~Contribution.is_deleted & ~contrib_event.is_deleted
        ),
        db.or_(
            EventNote.link_type != LinkType.subcontribution,
            db.and_(
                ~SubContribution.is_deleted,
                ~subcontrib_contrib.is_deleted,
                ~subcontrib_event.is_deleted,
            )
        ),
        db.or_(
            EventNote.link_type != LinkType.session,
            ~Session.is_deleted & ~session_event.is_deleted
        )
    )

    return (
        EventNote.query
        .outerjoin(EventNote.linked_event)
        .outerjoin(EventNote.contribution)
        .outerjoin(Contribution.event.of_type(contrib_event))
        .outerjoin(Contribution.session.of_type(contrib_session))
        .outerjoin(EventNote.subcontribution)
        .outerjoin(SubContribution.contribution.of_type(subcontrib_contrib))
        .outerjoin(subcontrib_contrib.event.of_type(subcontrib_event))
        .outerjoin(subcontrib_contrib.session.of_type(subcontrib_session))
        .outerjoin(EventNote.session)
        .outerjoin(Session.event.of_type(session_event))
        .filter(note_filter)
    )


def get_attachment_query():
    """Build an ORM query which gets all attachments."""
    contrib_event = db.aliased(Event)
    contrib_session = db.aliased(Session)
    subcontrib_contrib = db.aliased(Contribution)
    subcontrib_session = db.aliased(Session)
    subcontrib_event = db.aliased(Event)
    session_event = db.aliased(Event)

    attachment_filter = db.and_(
        ~Attachment.is_deleted,
        ~AttachmentFolder.is_deleted,
        db.or_(
            AttachmentFolder.link_type != LinkType.event,
            ~Event.is_deleted,
        ),
        db.or_(
            AttachmentFolder.link_type != LinkType.contribution,
            ~Contribution.is_deleted & ~contrib_event.is_deleted
        ),
        db.or_(
            AttachmentFolder.link_type != LinkType.subcontribution,
            db.and_(
                ~SubContribution.is_deleted,
                ~subcontrib_contrib.is_deleted,
                ~subcontrib_event.is_deleted
            )
        ),
        db.or_(
            AttachmentFolder.link_type != LinkType.session,
            ~Session.is_deleted & ~session_event.is_deleted
        )
    )

    return (
        Attachment.query
        .join(Attachment.folder)
        .outerjoin(AttachmentFolder.linked_event)
        .outerjoin(AttachmentFolder.contribution)
        .outerjoin(Contribution.event.of_type(contrib_event))
        .outerjoin(Contribution.session.of_type(contrib_session))
        .outerjoin(AttachmentFolder.subcontribution)
        .outerjoin(SubContribution.contribution.of_type(subcontrib_contrib))
        .outerjoin(subcontrib_contrib.event.of_type(subcontrib_event))
        .outerjoin(subcontrib_contrib.session.of_type(subcontrib_session))
        .outerjoin(AttachmentFolder.session)
        .outerjoin(Session.event.of_type(session_event))
        .filter(attachment_filter)
        .filter(AttachmentFolder.link_type != LinkType.category)
    )
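Each `db.or_(link_type != X, <parents not deleted>)` clause in these filters encodes an implication: if the item is linked to an X, then that X and its ancestors must not be deleted. Rows linked to something else pass the clause regardless, which matters because the outer joins leave the unrelated columns as NULL. The same logic in plain Python, as a rough sketch (the function names and the dictionary layout are made up for illustration and do not exist in the plugin):

```python
from typing import Dict, Optional

# Deletion flags of the objects reached through the outer joins; None models
# the NULL that appears when a join does not apply to this link type.
Row = Dict[str, Optional[bool]]


def implies(condition: bool, requirement: bool) -> bool:
    """Logical implication, written the same way as the db.or_() clauses."""
    return (not condition) or requirement


def note_is_counted(link_type: str, note_deleted: bool, row: Row) -> bool:
    def alive(*names: str) -> bool:
        # An object counts as alive only if its is_deleted flag is known and False
        return all(row.get(name) is False for name in names)

    return (
        not note_deleted
        and implies(link_type == 'event', alive('event'))
        and implies(link_type == 'contribution', alive('contribution', 'contrib_event'))
        and implies(link_type == 'subcontribution',
                    alive('subcontribution', 'subcontrib_contrib', 'subcontrib_event'))
        and implies(link_type == 'session', alive('session', 'session_event'))
    )
```

A note attached to a contribution is therefore counted only while the note, the contribution and the contribution's event are all undeleted; the state of any session or subcontribution columns is irrelevant for that row.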

16

prometheus/pytest.ini

Normal file

@@ -0,0 +1,16 @@
[pytest]
; more verbose summary (include skip/fail/error/warning), coverage
addopts = -rsfEw --cov . --cov-report html --no-cov-on-fail
; only check for tests in suffixed files
python_files = *_test.py
; we need the prometheus plugin to be loaded
indico_plugins = livesync prometheus
; fail if there are warnings, but ignore ones that are likely just noise
filterwarnings =
    error
    ignore:.*_app_ctx_stack.*:DeprecationWarning
    ignore::sqlalchemy.exc.SAWarning
    ignore::UserWarning
    ignore:Creating a LegacyVersion has been deprecated:DeprecationWarning
; use redis-server from $PATH
redis_exec = redis-server

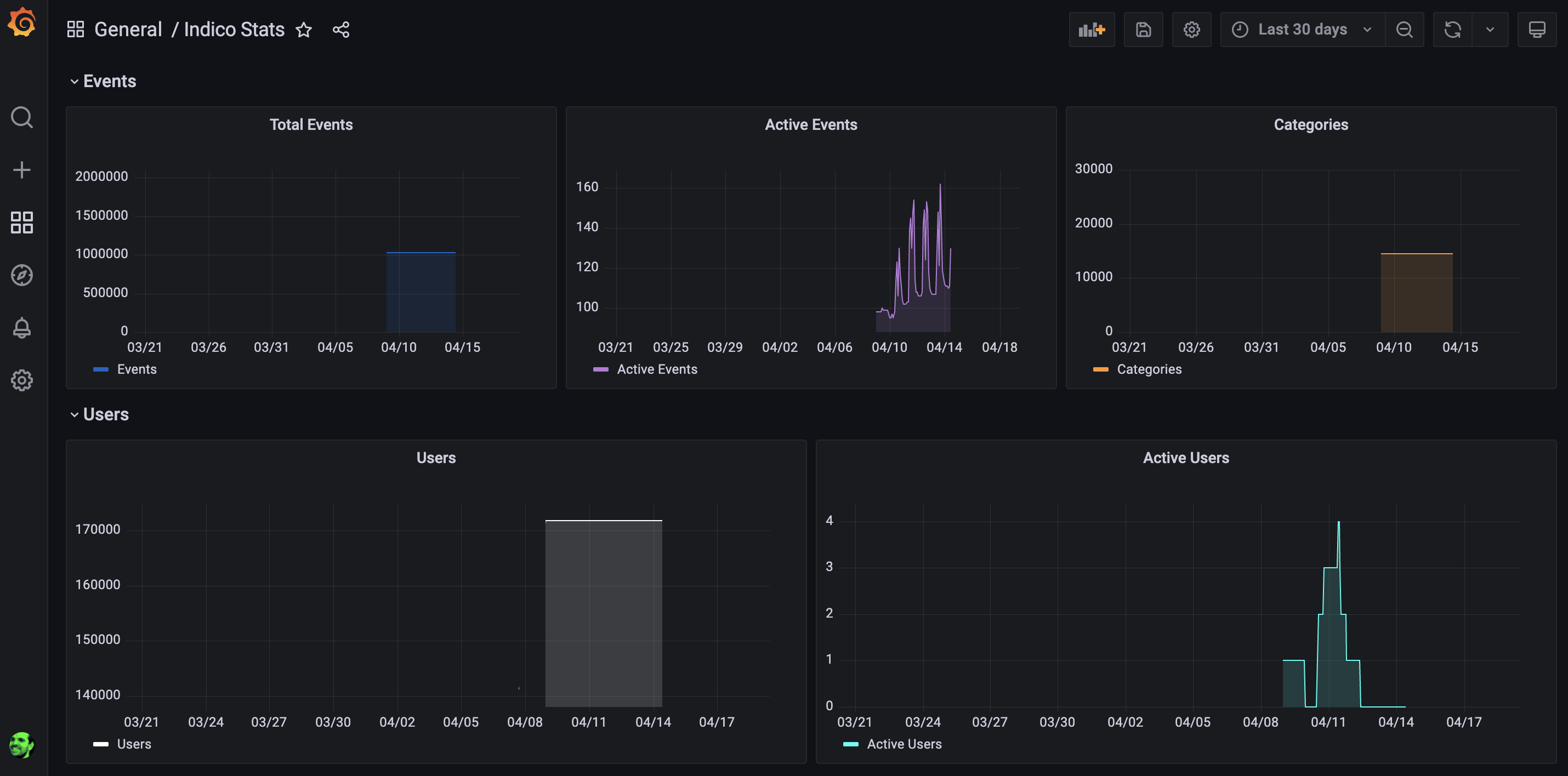

BIN

prometheus/screenshot.png

Normal file

Binary file not shown.

Size: 307 KiB

32

prometheus/setup.cfg

Normal file

@@ -0,0 +1,32 @@
[metadata]
name = indico-plugin-prometheus
version = 3.2
description = Prometheus metrics in Indico servers
long_description = file: README.md
long_description_content_type = text/markdown; charset=UTF-8; variant=GFM
url = https://github.com/indico/indico-plugins
license = MIT
author = Indico Team
author_email = indico-team@cern.ch
classifiers =
    Environment :: Plugins
    Environment :: Web Environment
    License :: OSI Approved :: MIT License
    Programming Language :: Python :: 3.9
    Programming Language :: Python :: 3.10

[options]
packages = find:
zip_safe = false
include_package_data = true
python_requires = >=3.9.0, <3.11
install_requires =
    indico>=3.2
    prometheus-client==0.16.0

[options.entry_points]
indico.plugins =
    prometheus = indico_prometheus.plugin:PrometheusPlugin

[pydocstyle]
ignore = D100,D101,D102,D103,D104,D105,D107,D203,D213

11

prometheus/setup.py

Normal file

@@ -0,0 +1,11 @@
# This file is part of the Indico plugins.
# Copyright (C) 2002 - 2023 CERN
#
# The Indico plugins are free software; you can redistribute
# them and/or modify them under the terms of the MIT License;
# see the LICENSE file for more details.

from setuptools import setup


setup()

95

prometheus/tests/endpoint_test.py

Normal file

@@ -0,0 +1,95 @@
# This file is part of the Indico plugins.
# Copyright (C) 2002 - 2023 CERN
#
# The Indico plugins are free software; you can redistribute
# them and/or modify them under the terms of the MIT License;
# see the LICENSE file for more details.

import pytest
from prometheus_client.parser import text_string_to_metric_families

from indico.core.plugins import plugin_engine


@pytest.fixture
def get_metrics(make_test_client):
    def _get_metrics(token=None, expect_status_code=200):
        client = make_test_client()
        resp = client.get('/metrics', headers=({'Authorization': f'Bearer {token}'} if token else {}))
        assert resp.status_code == expect_status_code

        if resp.status_code == 200:
            return {
                metric.name: metric.samples[0].value
                for metric in text_string_to_metric_families(resp.data.decode('utf-8'))
            }, resp.headers
        else:
            return None
    return _get_metrics


@pytest.fixture
def enable_plugin():
    plugin_engine.get_plugin('prometheus').settings.set('enabled', True)


@pytest.mark.usefixtures('db')
def test_endpoint_disabled_by_default(get_metrics):
    get_metrics(expect_status_code=503)


@pytest.mark.usefixtures('db', 'enable_plugin')
def test_endpoint_works(get_metrics):
    get_metrics()


@pytest.mark.usefixtures('db', 'enable_plugin')
def test_endpoint_empty(get_metrics):

    metrics, _ = get_metrics()

    assert metrics['indico_num_users'] == 1.0
    assert metrics['indico_num_active_users'] == 0.0
    assert metrics['indico_num_events'] == 0.0
    assert metrics['indico_num_categories'] == 1.0
    assert metrics['indico_num_attachment_files'] == 0.0
    assert metrics['indico_num_active_attachment_files'] == 0.0


@pytest.mark.usefixtures('db', 'enable_plugin')
def test_endpoint_cached(get_metrics, create_event):
    metrics, headers = get_metrics()
    assert metrics['indico_num_events'] == 0.0
    assert headers['X-Cached'] == 'no'

    # create an event
    create_event(title='Test event #1')

    metrics, headers = get_metrics()

    # cached information should show zero events
    assert metrics['indico_num_events'] == 0.0
    assert headers['X-Cached'] == 'yes'


@pytest.mark.usefixtures('db', 'enable_plugin')
def test_endpoint_returning_data(get_metrics, create_event):
    # create an event
    create_event(title='Test event #1')

    metrics, _ = get_metrics()
    assert metrics['indico_num_users'] == 2.0
    assert metrics['indico_num_active_users'] == 0.0
    assert metrics['indico_num_events'] == 1.0
    assert metrics['indico_num_categories'] == 2.0
    assert metrics['indico_num_attachment_files'] == 0.0
    assert metrics['indico_num_active_attachment_files'] == 0.0


@pytest.mark.usefixtures('db', 'enable_plugin')
def test_endpoint_authentication(get_metrics):
    plugin_engine.get_plugin('prometheus').settings.set('token', 'schnitzel_with_naughty_rice')
    get_metrics(expect_status_code=401)

    get_metrics(token='schnitzel_with_naughty_rice')
    get_metrics(token='spiritual_codfish', expect_status_code=401)
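The `get_metrics` fixture turns the response body into a `{name: value}` dictionary using `prometheus_client`'s parser. For readers unfamiliar with the text exposition format, roughly the same reduction can be sketched with the standard library alone. This simplified parser is an illustration, not a replacement: `parse_simple_metrics` is a hypothetical name, and it only handles unlabelled samples without timestamps.

```python
from typing import Dict


def parse_simple_metrics(text: str) -> Dict[str, float]:
    """Parse unlabelled samples from Prometheus text exposition output.

    Comment lines (# HELP / # TYPE) and blank lines are skipped; labelled
    samples and timestamps are deliberately not handled in this sketch.
    """
    values: Dict[str, float] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith('#'):
            continue
        name, _, raw_value = line.partition(' ')
        values[name] = float(raw_value)
    return values


sample = '''\
# HELP indico_num_events Number of Events
# TYPE indico_num_events gauge
indico_num_events 0.0
# HELP indico_num_users Number of Users
# TYPE indico_num_users gauge
indico_num_users 1.0
'''
print(parse_simple_metrics(sample))
```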
